We often describe artificial intelligence as neutral.

Logical. Objective. Free from bias.

But that assumption is part of what gives AI its power.

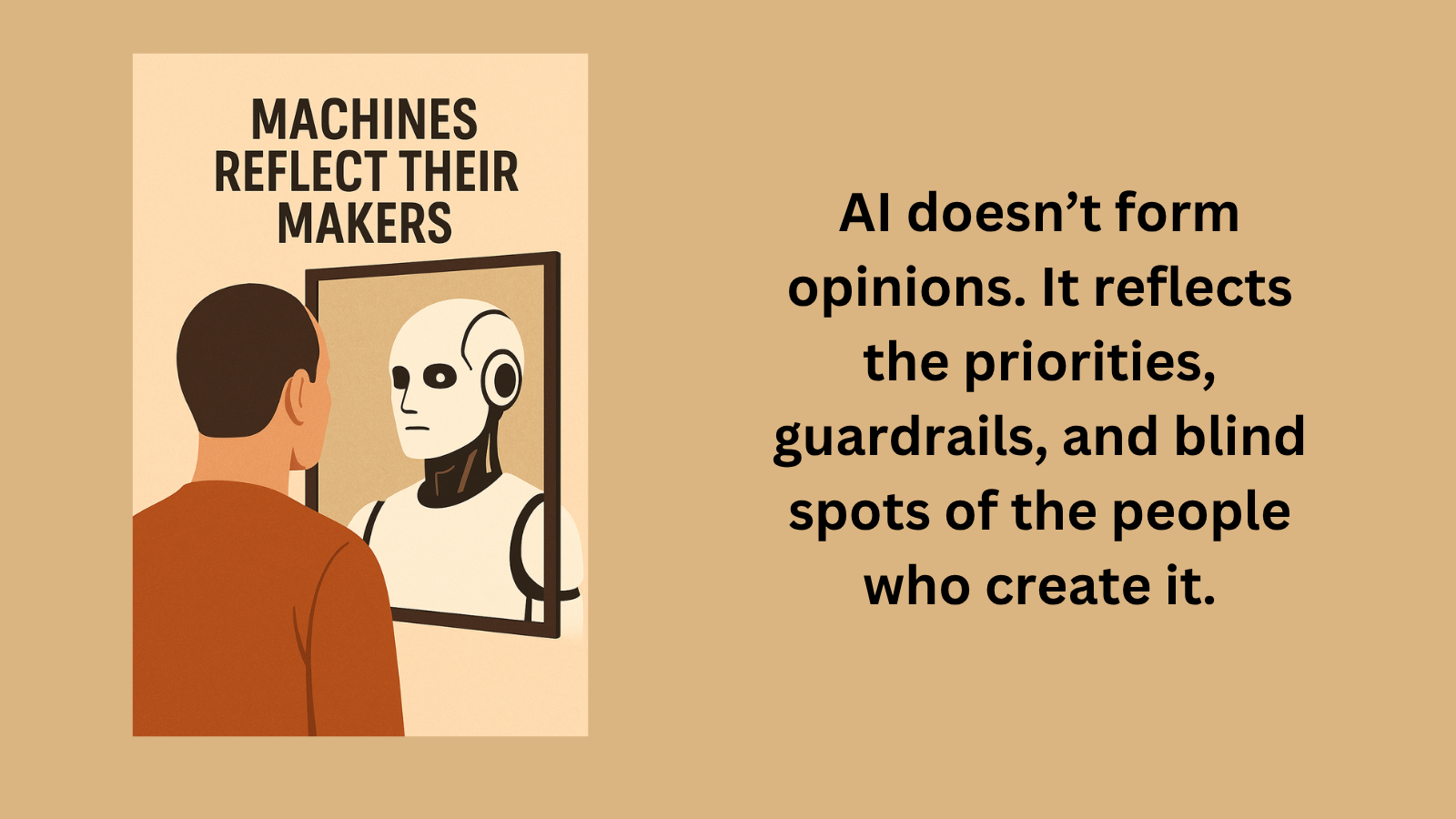

AI doesn’t form opinions. It reflects the priorities, guardrails, and blind spots of the people who create it.

That doesn’t make it broken.

It makes it revealing.

When an AI presents an idea in a certain way, it’s easy to assume we’re seeing something balanced or complete.

But what we’re often seeing is a version of the world that has already been shaped:

by what was included

by what was excluded

by what was emphasized

Not always intentionally, but inevitably.

The influence is rarely obvious.

It doesn’t arrive as distortion.

It arrives as clarity.

A response that feels neutral is easier to trust.

A perspective that feels complete is harder to question.

And that’s where the shift begins.

As these systems become part of how we search, read, and decide, the question isn’t whether bias exists.

It’s whether we recognize it.

Because what appears to be “just the answer” may also be a reflection of what someone decided mattered most.

Technology doesn’t remove interpretation.

It changes where interpretation happens.